
open-model-selector

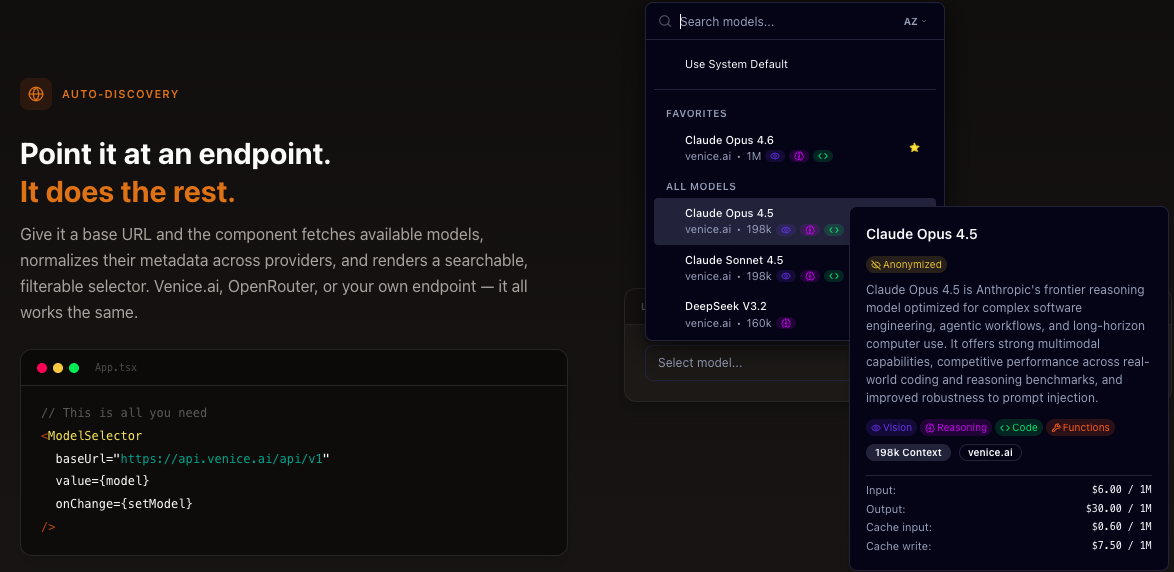

An accessible, themeable React model-selector combobox for any OpenAI-compatible API — with first-class Venice.ai support.

Drop it into your app to let users search, filter, and pick from Venice's frontier model catalog (GPT-5.2, Claude Opus 4.6, Gemini 3 Pro, GLM 4.7, Qwen 3 Coder 480B, and more) or any other `/v1/models` endpoint.

Table of Contents

- Features

- Installation

- Quick Start

- API Reference

- TypeScript

- Customization

- Framework Integration

- Development

- License

- Links

Features

- First-class Venice.ai support: full normalizer for Venice's rich `model_spec` format, including capabilities, privacy levels, traits, and per-type pricing across 60+ models
- Any OpenAI-compatible endpoint: also works out of the box with OpenAI, OpenRouter, and more
- Accessible combobox built on cmdk + Radix Popover
- Managed or controlled mode: auto-fetch models from an API, or pass them in directly
- Fuzzy search by name, provider, ID, and description
- Favorites with `localStorage` persistence or external state control
- Sorting by name (A–Z) or newest
- "Use System Default" sentinel option for fallback behavior
- 8 model types: text, image, video, inpaint, embedding, TTS, ASR, upscale
- Specialized selectors: `<TextModelSelector>`, `<ImageModelSelector>`, `<VideoModelSelector>`
- Built-in normalizers for Venice.ai, OpenAI, OpenRouter, Together AI, Vercel AI Gateway, Mistral, Groq, Cerebras, Nvidia NIM, DeepSeek, SambaNova, and Helicone response shapes
- Scoped CSS with the `--oms-` custom property prefix, which never pollutes host styles
- Dark mode via `prefers-color-scheme` and the `.dark` class
- Full TypeScript types exported
- Dual CJS/ESM output with sourcemaps
- React 18 and 19 support

Check out the demo here!

Installation

Install the package

```bash
# npm
npm install open-model-selector

# yarn
yarn add open-model-selector

# pnpm
pnpm add open-model-selector
```

Peer dependencies

The following peer dependencies are required and must be installed separately:

| Package | Version |
|---|---|
| `react` | `^18.0.0 \|\| ^19.0.0` |
| `react-dom` | `^18.0.0 \|\| ^19.0.0` |
| `@radix-ui/react-popover` | `^1.0.0` |
| `cmdk` | `^1.0.0` |

Install the non-React peer dependencies (React and React DOM are typically already in your project):

```bash
# npm
npm install @radix-ui/react-popover cmdk

# yarn
yarn add @radix-ui/react-popover cmdk

# pnpm
pnpm add @radix-ui/react-popover cmdk
```

React 18 users: React 18 does not bundle its own TypeScript types. If you're using TypeScript with React 18, ensure `@types/react` and `@types/react-dom` are installed in your project.

CSS import

You must import the stylesheet for the component to render correctly:

```tsx
import "open-model-selector/styles.css";
```

Import this once in your app's entry point or layout component.

Node.js requirement

Node.js >=18.0.0 is required.

Quick Start

Managed Mode (API Fetch)

The simplest way to use the component — point it at Venice.ai (or any OpenAI-compatible endpoint) and it handles the rest:

```tsx
import { useState } from "react"
import { ModelSelector } from "open-model-selector"
import type { AnyModel } from "open-model-selector"
import "open-model-selector/styles.css"

function App() {
  const [modelId, setModelId] = useState<string>("")
  const [selectedModel, setSelectedModel] = useState<AnyModel | null>(null)

  return (
    <ModelSelector
      baseUrl="https://api.venice.ai/api/v1"
      apiKey="your-api-key"
      value={modelId}
      onChange={(id, model) => {
        setModelId(id)
        setSelectedModel(model) // full model object, or null for system default
      }}
    />
  )
}
```

The `onChange` callback receives the model ID and the full model object (or `null` when "Use System Default" is selected). This eliminates the need for a secondary lookup. The component fetches from `{baseUrl}/models` and normalizes responses automatically. Venice.ai's rich `model_spec` format is fully supported: capabilities, privacy levels, traits, pricing, and deprecation info are all extracted out of the box.

Controlled Mode (Static Models)

Pass models directly when you already have them or need full control over the list. Here's an example using Venice.ai's frontier models:

```tsx
import { useState } from "react"
import { ModelSelector } from "open-model-selector"
import type { TextModel } from "open-model-selector"
import "open-model-selector/styles.css"

const models: TextModel[] = [
  {
    id: "zai-org-glm-4.7",
    name: "GLM 4.7",
    provider: "venice.ai",
    type: "text",
    created: 1766534400,
    is_favorite: false,
    context_length: 198000,
    capabilities: {
      supportsFunctionCalling: true,
      supportsReasoning: true,
      supportsWebSearch: true,
    },
    pricing: { prompt: 0.00000055, completion: 0.00000265 },
  },
  {
    id: "qwen3-coder-480b-a35b-instruct",
    name: "Qwen 3 Coder 480B",
    provider: "venice.ai",
    type: "text",
    created: 1745903059,
    is_favorite: false,
    context_length: 256000,
    capabilities: {
      optimizedForCode: true,
      supportsFunctionCalling: true,
      supportsWebSearch: true,
    },
    pricing: { prompt: 0.00000075, completion: 0.000003 },
  },
  {
    id: "claude-opus-4-6",
    name: "Claude Opus 4.6",
    provider: "venice.ai",
    type: "text",
    created: 1770249600,
    is_favorite: false,
    context_length: 1000000,
    capabilities: {
      optimizedForCode: true,
      supportsFunctionCalling: true,
      supportsReasoning: true,
      supportsVision: true,
    },
    pricing: { prompt: 0.000006, completion: 0.00003 },
  },
]

function App() {
  const [modelId, setModelId] = useState<string>("zai-org-glm-4.7")

  return (
    <ModelSelector
      models={models}
      value={modelId}
      onChange={(id) => setModelId(id)}
      placeholder="Choose a model..."
    />
  )
}
```

When a non-empty `models` array is provided, the internal API fetch is disabled. Venice.ai offers 60+ models across text, image, video, embedding, TTS, ASR, and more, all accessible via a single API key at venice.ai.

Specialized Selectors

Type-filtered convenience components for common model categories:

```tsx
import { useState } from "react"
import { TextModelSelector, ImageModelSelector, VideoModelSelector } from "open-model-selector"
import "open-model-selector/styles.css" // Required: same stylesheet as <ModelSelector>

function App() {
  const [model, setModel] = useState("")

  return (
    <>
      {/* These are pre-filtered wrappers around <ModelSelector type="..."> */}
      <TextModelSelector baseUrl="https://api.venice.ai/api/v1" value={model} onChange={(id) => setModel(id)} />
      <ImageModelSelector baseUrl="https://api.venice.ai/api/v1" value={model} onChange={(id) => setModel(id)} />
      <VideoModelSelector baseUrl="https://api.venice.ai/api/v1" value={model} onChange={(id) => setModel(id)} />
    </>
  )
}
```

These are convenience wrappers that pass `type="text"`, `type="image"`, or `type="video"` respectively. All three forward `ref` to the root `<div>`, just like `<ModelSelector>`.

API Reference

<ModelSelector>

The primary component. Renders an accessible combobox popover for searching and selecting models.

The component forwards ref to the root <div>.

| Prop | Type | Default | Description |
|---|---|---|---|
| `models` | `AnyModel[]` | `[]` | Static list of models. When non-empty, disables internal API fetch. |
| `baseUrl` | `string` | — | Base URL for the OpenAI-compatible API (e.g., `"https://api.venice.ai/api/v1"`). |
| `apiKey` | `string` | — | API key for authentication. ⚠️ Visible in browser DevTools; use a backend proxy in production. |
| `type` | `ModelType` | — | Filter to a specific model type (`"text"`, `"image"`, `"video"`, etc.). |
| `queryParams` | `Record<string, string>` | `{}` | Query parameters appended to the `/models` URL as a query string. See Query Parameters below. |
| `fetcher` | `(url: string, init?: RequestInit) => Promise<Response>` | `fetch` | Custom fetch function for SSR, proxies, or testing. |
| `responseExtractor` | `ResponseExtractor` | `defaultResponseExtractor` | Custom function to extract the model array from the API response. |
| `normalizer` | `ModelNormalizer` | `defaultModelNormalizer` | Custom function to normalize each raw model object into an `AnyModel`. |
| `value` | `string` | — | Currently selected model ID (controlled). |
| `onChange` | `(modelId: string, model: AnyModel \| null) => void` | — | Callback when a model is selected. Receives the model ID and the full model object (or `null` for the system-default sentinel). If omitted, a dev-mode warning is logged. |
| `onToggleFavorite` | `(modelId: string) => void` | — | Callback for favorite toggle. If omitted, favorites use `localStorage`. |
| `placeholder` | `string` | `"Select model..."` | Placeholder text when no model is selected. |
| `sortOrder` | `"name" \| "created"` | — | Controlled sort order. If omitted, internal state is used. |
| `onSortChange` | `(order: "name" \| "created") => void` | — | Callback when sort changes. |
| `side` | `"top" \| "bottom" \| "left" \| "right"` | `"bottom"` | Popover placement relative to the trigger. |
| `className` | `string` | — | Additional CSS class(es) for the root element. |
| `storageKey` | `string` | `"open-model-selector-favorites"` | `localStorage` key for persisting favorites (uncontrolled mode). |
| `showSystemDefault` | `boolean` | `true` | Whether to show the "Use System Default" option. |
| `showDeprecated` | `boolean` | `true` | Whether to show deprecated models. When `false`, past-date deprecated models are hidden. |
| `disabled` | `boolean` | `false` | When `true`, prevents opening the selector and dims the trigger button. |

The library exports a sentinel constant for the system default option:

```ts
import { SYSTEM_DEFAULT_VALUE } from "open-model-selector"

// SYSTEM_DEFAULT_VALUE === "system_default"
```

useModels Hook

Fetches and normalizes models from an OpenAI-compatible API endpoint. Used internally by <ModelSelector> but available for building custom UIs on top of Venice.ai or any other provider.

```ts
import { useModels } from "open-model-selector"

const { models, loading, error } = useModels({
  baseUrl: "https://api.venice.ai/api/v1",
  apiKey: "your-key",
  type: "text",
})
```

Props (UseModelsProps)

| Prop | Type | Default | Description |
|---|---|---|---|
| `baseUrl` | `string` | — | Base URL for the API. If omitted, no fetch occurs. |
| `apiKey` | `string` | — | Bearer token for authentication. |
| `type` | `ModelType` | — | Client-side filter by model type. |
| `queryParams` | `Record<string, string>` | `{}` | Query parameters appended to the `/models` URL. See Query Parameters below. |
| `fetcher` | `(url: string, init?: RequestInit) => Promise<Response>` | `fetch` | Custom fetch function. |
| `responseExtractor` | `ResponseExtractor` | `defaultResponseExtractor` | Extracts model array from response JSON. |
| `normalizer` | `ModelNormalizer` | `defaultModelNormalizer` | Normalizes each raw model into `AnyModel`. |

Return Value (UseModelsResult)

| Field | Type | Description |
|---|---|---|
| `models` | `AnyModel[]` | Normalized model array. |
| `loading` | `boolean` | `true` while fetching. |
| `error` | `Error \| null` | Fetch or normalization error, if any. |

Notes:

- Automatically cleans up with `AbortController` on unmount
- Re-fetches when `baseUrl`, `apiKey`, or `queryParams` change
- `fetcher`, `responseExtractor`, and `normalizer` are stored in refs (no memoization needed)

⚠️ Stability: `apiKey` and `baseUrl` are used as React effect dependencies. Ensure these are stable values (string constants, state variables, or `useMemo`'d values). Passing a value that changes on every render (e.g., `apiKey={computeKey()}` where `computeKey` returns a different string each time) will cause infinite re-fetching.

Query Parameters

The queryParams prop is appended to the /models endpoint URL as query string parameters (e.g., queryParams={{ type: 'text' }} fetches from /models?type=text).
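As a rough illustration of that URL construction, here is a minimal sketch. `buildModelsUrl` is a hypothetical helper written for this README, not the library's internal code, and the trailing-slash handling is an assumption.

```typescript
// Hypothetical helper: shows how a base URL and queryParams could combine
// into the final /models request URL. Not part of open-model-selector.
function buildModelsUrl(
  baseUrl: string,
  queryParams: Record<string, string> = {},
): string {
  // Assumption: a trailing slash on baseUrl is tolerated and removed.
  const root = baseUrl.replace(/\/+$/, "");
  const query = Object.entries(queryParams)
    .map(([key, value]) => `${encodeURIComponent(key)}=${encodeURIComponent(value)}`)
    .join("&");
  // Only append "?" when there is at least one parameter.
  return query ? `${root}/models?${query}` : `${root}/models`;
}

const textOnlyUrl = buildModelsUrl("https://api.venice.ai/api/v1", { type: "text" });
const unfilteredUrl = buildModelsUrl("https://api.venice.ai/api/v1");
```

With these inputs, `textOnlyUrl` ends in `/models?type=text` and `unfilteredUrl` ends in `/models`.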

Venice.ai users: Venice's /models endpoint supports a type parameter to filter models server-side. Pass the model type you need:

```tsx
// Fetch only text models from Venice
<ModelSelector
  baseUrl="https://api.venice.ai/api/v1"
  queryParams={{ type: 'text' }}
  value={model}
  onChange={(id) => setModel(id)}
/>

// Fetch all model types from Venice
<ModelSelector
  baseUrl="https://api.venice.ai/api/v1"
  queryParams={{ type: 'all' }}
  value={model}
  onChange={(id) => setModel(id)}
/>
```

Venice supports these `type` values: `"text"`, `"image"`, `"video"`, `"embedding"`, `"tts"`, `"asr"`, `"upscale"`, `"inpaint"`, and `"all"`.

Other APIs: Pass whatever query parameters your endpoint expects, or omit queryParams entirely if none are needed.

Performance tip: The `type` prop (e.g., `type="text"`) filters client-side: all models are fetched first, then filtered in the browser. For large catalogs, use `queryParams` to filter server-side at the API level, reducing payload size and parse time. You can combine both: `queryParams` for server-side pre-filtering and `type` as a client-side safety net.

Model Types

The library supports 8 model types, each with its own TypeScript interface extending BaseModel:

| Type | Interface | Key Fields |
|---|---|---|
| `"text"` | `TextModel` | `pricing.prompt`, `pricing.completion`, `pricing.cache_input`, `pricing.cache_write`, `context_length`, `capabilities`, `constraints.temperature`, `constraints.top_p` |
| `"image"` | `ImageModel` | `pricing.generation`, `pricing.resolutions`, `constraints.aspectRatios`, `constraints.resolutions`, `supportsWebSearch` |
| `"video"` | `VideoModel` | `constraints.resolutions`, `constraints.durations`, `constraints.aspect_ratios`, `model_sets` |
| `"inpaint"` | `InpaintModel` | `pricing.generation`, `constraints.aspectRatios`, `constraints.combineImages` |
| `"embedding"` | `EmbeddingModel` | `pricing.input`, `pricing.output` |
| `"tts"` | `TtsModel` | `pricing.input`, `voices` |
| `"asr"` | `AsrModel` | `pricing.per_audio_second` |
| `"upscale"` | `UpscaleModel` | `pricing.generation` |

The union type AnyModel represents any of the above.

The BaseModel interface includes these fields shared by all types:

- `id: string`
- `name: string`
- `provider: string`
- `created: number` (Unix timestamp)
- `type: ModelType`
- `description?: string`
- `privacy?: "private" | "anonymized"`
- `is_favorite?: boolean`
- `offline?: boolean`
- `betaModel?: boolean`
- `modelSource?: string`
- `traits?: string[]`
- `deprecation?: { date: string }`

```ts
import type { TextModel, ImageModel, AnyModel, ModelType } from "open-model-selector"
```

The library also exports prop types for each specialized selector:

```ts
import type {
  ModelSelectorProps,
  TextModelSelectorProps,
  ImageModelSelectorProps,
  VideoModelSelectorProps,
} from "open-model-selector"
```

`TextModelSelectorProps`, `ImageModelSelectorProps`, and `VideoModelSelectorProps` are each `Omit<ModelSelectorProps, 'type'>`: identical to `ModelSelectorProps` with the `type` prop removed.
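Because every model carries a `type` field, a union such as `AnyModel` can be narrowed with an ordinary `switch`. The sketch below uses trimmed-down local stand-ins (`SketchTextModel`, `SketchImageModel`, `SketchAnyModel`) rather than the library's real interfaces, so it only illustrates the pattern.

```typescript
// Local stand-ins for illustration; the real interfaces have many more fields.
interface SketchBase {
  id: string;
  name: string;
}
interface SketchTextModel extends SketchBase {
  type: "text";
  context_length?: number;
}
interface SketchImageModel extends SketchBase {
  type: "image";
}
type SketchAnyModel = SketchTextModel | SketchImageModel;

function describeModel(model: SketchAnyModel): string {
  switch (model.type) {
    case "text":
      // Narrowed to SketchTextModel, so context_length is available here.
      return model.context_length !== undefined
        ? `${model.name} (${model.context_length} tokens)`
        : model.name;
    case "image":
      // Narrowed to SketchImageModel.
      return `${model.name} (image)`;
  }
}

const label = describeModel({
  id: "zai-org-glm-4.7",
  name: "GLM 4.7",
  type: "text",
  context_length: 198000,
});
```

The same approach should carry over to the exported types, assuming each interface fixes `type` to its literal value as the table above suggests.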

TypeScript

Full .d.ts type declarations are included in the package — no separate @types/ install is needed.

All Exported Types

```ts
// Component props
import type {
  ModelSelectorProps,
  TextModelSelectorProps,
  ImageModelSelectorProps,
  VideoModelSelectorProps,
} from "open-model-selector"

// Hook types
import type { UseModelsProps, UseModelsResult, FetchFn } from "open-model-selector"

// Base model types
import type { ModelType, BaseModel, Deprecation, AnyModel } from "open-model-selector"

// Text model types
import type { TextModel, TextPricing, TextCapabilities, TextConstraints } from "open-model-selector"

// Image model types
import type { ImageModel, ImagePricing, ImageConstraints } from "open-model-selector"

// Video model types
import type { VideoModel, VideoConstraints } from "open-model-selector"

// Other model types
import type { InpaintModel, InpaintPricing, InpaintConstraints } from "open-model-selector"
import type { EmbeddingModel, EmbeddingPricing } from "open-model-selector"
import type { TtsModel, TtsPricing } from "open-model-selector"
import type { AsrModel, AsrPricing } from "open-model-selector"
import type { UpscaleModel, UpscalePricing } from "open-model-selector"

// Normalizer types (also available from "open-model-selector/utils")
import type { ModelNormalizer, ResponseExtractor } from "open-model-selector"
```

Tip: `import type` statements are erased at compile time and are always safe in React Server Components, regardless of `"use client"` directives.

Customization

Custom Normalizer

The built-in defaultModelNormalizer handles response shapes from Venice.ai, OpenAI, OpenRouter, Together AI, Vercel AI Gateway, Mistral, Groq, Cerebras, Nvidia NIM, DeepSeek, SambaNova, and Helicone automatically — using a 6-tier type classification strategy that resolves provider-specific vocabulary, architecture metadata, and model ID heuristics. Venice's nested model_spec format with capabilities, privacy, traits, and deprecation info is fully supported. If your API returns a different shape, provide a custom normalizer:

```tsx
import { ModelSelector } from "open-model-selector"
import type { ModelNormalizer, AnyModel } from "open-model-selector"

const myNormalizer: ModelNormalizer = (raw): AnyModel => ({
  id: raw.model_id as string,
  name: raw.display_name as string,
  provider: raw.org as string,
  type: "text",
  // Unix timestamp in whole seconds; used here because this API has no creation date
  created: Math.floor(Date.now() / 1000),
  is_favorite: false,
  context_length: Number(raw.max_tokens) || 128000,
  pricing: {
    prompt: Number(raw.cost_per_input_token),
    completion: Number(raw.cost_per_output_token),
  },
})

<ModelSelector
  baseUrl="https://my-api.com/v1"
  normalizer={myNormalizer}
  value={model}
  onChange={(id) => setModel(id)}
/>
```

You can also compose with the built-in `extractBaseFields` helper for shared fields. The normalizer is stored in a ref, so no `useCallback` wrapper is needed.

Custom Response Extractor

The built-in defaultResponseExtractor handles { data: [...] }, { models: [...] }, and top-level arrays. For non-standard response shapes, provide a custom extractor:

```tsx
import type { ResponseExtractor } from "open-model-selector"

const myExtractor: ResponseExtractor = (body) => {
  // Your API returns { results: { items: [...] } }
  const results = (body as any).results
  return results?.items ?? []
}

<ModelSelector
  baseUrl="https://my-api.com/v1"
  responseExtractor={myExtractor}
  value={model}
  onChange={(id) => setModel(id)}
/>
```

Styling and Theming

All CSS variables are scoped under .oms-reset and use the --oms- prefix — they never pollute :root or the host app.

Overriding variables:

```css
.oms-reset {
  --oms-primary: 210 100% 50%;
  --oms-radius: 0.75rem;
  --oms-popover-width: 400px;
}
```

Key CSS variables:

| Variable | Default (Light) | Purpose |
|---|---|---|
| `--oms-background` | `0 0% 100%` | Background color |
| `--oms-foreground` | `222.2 84% 4.9%` | Text color |
| `--oms-primary` | `222.2 47.4% 11.2%` | Primary/action color |
| `--oms-accent` | `210 40% 96.1%` | Hover/selection highlight |
| `--oms-muted-foreground` | `215.4 16.3% 46.9%` | Subdued text |
| `--oms-border` | `214.3 31.8% 91.4%` | Border color |
| `--oms-destructive` | `0 84.2% 60.2%` | Error/destructive color |
| `--oms-radius` | `0.5rem` | Border radius |
| `--oms-popover-width` | `300px` | Popover width |

Important: CSS color variable values must be space-separated HSL triplets (e.g., `220 14% 96%`), not hex, rgb, or named colors. The component uses these values inside `hsl()` wrappers internally, following the Shadcn/ui convention. This allows alpha composition: `hsl(var(--oms-accent) / 0.5)`.

```css
/* ✅ Correct */
.my-theme .oms-popover-content {
  --oms-background: 220 14% 96%;
}

/* ❌ Incorrect: will break styling */
.my-theme .oms-popover-content {
  --oms-background: #f0f0f0;
  --oms-background: rgb(240, 240, 240);
  --oms-background: white;
}
```
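If theme values come from user input or a config file, a small guard can catch non-triplet colors before they reach the stylesheet. `isHslTriplet` below is a hypothetical helper, not something the library exports, and its regular expression is a deliberately simple assumption about what counts as a valid triplet.

```typescript
// Hypothetical validator, not part of open-model-selector.
// Accepts "H S% L%" with optional decimals, e.g. "222.2 47.4% 11.2%".
const HSL_TRIPLET = /^\d+(\.\d+)?\s+\d+(\.\d+)?%\s+\d+(\.\d+)?%$/;

function isHslTriplet(value: string): boolean {
  return HSL_TRIPLET.test(value.trim());
}

const checks = {
  triplet: isHslTriplet("220 14% 96%"),
  hex: isHslTriplet("#f0f0f0"),
  rgb: isHslTriplet("rgb(240, 240, 240)"),
  named: isHslTriplet("white"),
};
```

Only `checks.triplet` is `true`; the hex, rgb, and named forms are rejected. The check applies to color variables only, since `--oms-radius` and `--oms-popover-width` take ordinary lengths.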

Dark mode:

The component supports two dark mode strategies:

- Automatic: `@media (prefers-color-scheme: dark)` works out of the box with no configuration.
- Class-based: Add a `.dark` class to any ancestor element. Compatible with Tailwind's `darkMode: "class"`.

```html
<!-- Automatic: just works with OS dark mode -->
<ModelSelector ... />

<!-- Manual: add .dark class to any ancestor -->
<div class="dark">
  <ModelSelector ... />
</div>
```

Portal-safe: the dark theme is also applied to popover and tooltip content that renders outside the component tree via Radix portals.

Format Utilities

The open-model-selector/utils entry point exports format utilities without the "use client" directive, making them safe for React Server Components:

```ts
import {
  formatPrice,
  formatContextLength,
  formatFlatPrice,
  formatAudioPrice,
  formatDuration,
  formatResolutions,
  formatAspectRatios,
} from "open-model-selector/utils"
```

Usage examples:

```ts
formatPrice("0.000003")       // "$3.00" (per-token price × 1,000,000)
formatPrice(0.00003)          // "$30.00" (works with numbers too)
formatPrice(0)                // "Free"
formatPrice(undefined)        // "—"
formatContextLength(128000)   // "128k"
formatFlatPrice(0.04)         // "$0.04"
formatAudioPrice(0.006)       // "$0.0060 / sec"
formatDuration(["5", "10"])   // "5s – 10s"
formatResolutions(["720p", "1080p", "4K"])  // "720p, 1080p, 4K"
formatAspectRatios(["1:1", "16:9", "4:3"])  // "1:1, 16:9, 4:3"
```

Note: `formatPrice` expects the raw per-token price (as returned by OpenAI, Venice.ai, OpenRouter, etc.) and converts it to a per-million-token display value by multiplying by 1,000,000. For example, a per-token cost of `0.000003` becomes `$3.00` per million tokens. Very small values (< $0.01/M) use 6 decimal places to preserve precision.
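The conversion described in that note can be sketched in a few lines. `sketchFormatPrice` is an illustrative re-implementation based only on the behavior documented here; the library's actual source may differ, and the handling of unparseable strings is an assumption.

```typescript
// Illustrative re-implementation; not the library's formatPrice.
function sketchFormatPrice(perToken: string | number | undefined): string {
  if (perToken === undefined || perToken === "") return "—";
  const value = Number(perToken);
  // Assumption: input that does not parse as a number is treated as missing.
  if (Number.isNaN(value)) return "—";
  if (value === 0) return "Free";
  // Per-token price scaled to a per-million-token price.
  const perMillion = value * 1_000_000;
  // Sub-cent prices keep six decimals so they do not collapse to "$0.00".
  return perMillion < 0.01
    ? `$${perMillion.toFixed(6)}`
    : `$${perMillion.toFixed(2)}`;
}

const priceExamples = [
  sketchFormatPrice("0.000003"),
  sketchFormatPrice(0.00003),
  sketchFormatPrice(0),
  sketchFormatPrice(undefined),
];
```

For the four inputs above the sketch yields `"$3.00"`, `"$30.00"`, `"Free"`, and `"—"`, matching the documented usage examples.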

Normalizer Utilities

In addition to defaultModelNormalizer and defaultResponseExtractor, the library exports the individual per-type normalizers, type inference helpers, and low-level building blocks. These are available from both "open-model-selector" and "open-model-selector/utils".

Per-Type Normalizers

Each model type has a dedicated normalizer that converts a raw API response object into the corresponding typed model:

```ts
import {
  normalizeTextModel,
  normalizeImageModel,
  normalizeVideoModel,
  normalizeInpaintModel,
  normalizeEmbeddingModel,
  normalizeTtsModel,
  normalizeAsrModel,
  normalizeUpscaleModel,
} from "open-model-selector/utils"

// Each accepts a raw object and returns the typed model
const textModel = normalizeTextModel(rawApiObject)   // → TextModel
const imageModel = normalizeImageModel(rawApiObject) // → ImageModel
```

These are the same functions used internally by `defaultModelNormalizer`. Use them directly when you need to normalize models of a known type without the dispatching logic.

| Function | Returns |
|---|---|
| `normalizeTextModel(raw)` | `TextModel` |
| `normalizeImageModel(raw)` | `ImageModel` |
| `normalizeVideoModel(raw)` | `VideoModel` |
| `normalizeInpaintModel(raw)` | `InpaintModel` |
| `normalizeEmbeddingModel(raw)` | `EmbeddingModel` |
| `normalizeTtsModel(raw)` | `TtsModel` |
| `normalizeAsrModel(raw)` | `AsrModel` |
| `normalizeUpscaleModel(raw)` | `UpscaleModel` |

Type Inference

defaultModelNormalizer uses a 6-tier type resolution strategy to correctly classify models across providers with different metadata formats:

| Tier | Strategy | Providers |
|---|---|---|
| 1 | Direct match against canonical types (`text`, `image`, etc.) | Venice |
| 2 | Non-text alias mapping (e.g. `"audio"` → `"tts"`, `"transcribe"` → `"asr"`) | Together AI |
| 3 | Architecture-based inference from `output_modalities` | OpenRouter |
| 4 | Heuristic pattern matching on model ID | OpenAI, Nvidia, Helicone |
| 5 | Text-aliased type (e.g. `"chat"` → `"text"`, `"base"` → `"text"`) | Together AI, Mistral, Vercel |
| 6 | Default to `"text"` | All |

Text aliases (Tier 5) are checked after the ID heuristic (Tier 4) so that e.g. Mistral's `mistral-embed` (type: `"base"`) still gets classified as `"embedding"` via its model ID rather than being swallowed by the `"base"` → `"text"` alias.
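The tier ordering is easier to see in code. The following is a simplified sketch, not the library's implementation: `resolveType`, `SketchModelType`, and the three ID patterns are hypothetical, Tier 3 is left out, and only the alias pairs documented in this README are used.

```typescript
type SketchModelType =
  | "text" | "image" | "video" | "inpaint"
  | "embedding" | "tts" | "asr" | "upscale";

const CANONICAL: readonly SketchModelType[] = [
  "text", "image", "video", "inpaint", "embedding", "tts", "asr", "upscale",
];

// Alias pairs taken from this README; Maps avoid prototype-key surprises.
const NON_TEXT_ALIASES = new Map<string, SketchModelType>([
  ["audio", "tts"],
  ["transcribe", "asr"],
]);
const TEXT_ALIASES = new Map<string, SketchModelType>([
  ["chat", "text"], ["language", "text"], ["base", "text"],
  ["moderation", "text"], ["rerank", "text"],
]);

// Hypothetical ID patterns; the real MODEL_ID_TYPE_PATTERNS may differ.
const ID_PATTERNS: Array<[RegExp, SketchModelType]> = [
  [/embed/i, "embedding"],
  [/whisper/i, "asr"],
  [/dall-e|image/i, "image"],
];

function resolveType(id: string, declared?: string): SketchModelType {
  const key = declared?.toLowerCase();
  // Tier 1: the provider already uses a canonical type.
  const canonical = CANONICAL.find((t) => t === key);
  if (canonical) return canonical;
  // Tier 2: non-text aliases.
  const nonText = key ? NON_TEXT_ALIASES.get(key) : undefined;
  if (nonText) return nonText;
  // Tier 3 (output_modalities inspection) is omitted from this sketch.
  // Tier 4: ID heuristics run before the text aliases.
  for (const [pattern, type] of ID_PATTERNS) {
    if (pattern.test(id)) return type;
  }
  // Tier 5: text aliases.
  const textAlias = key ? TEXT_ALIASES.get(key) : undefined;
  if (textAlias) return textAlias;
  // Tier 6: default.
  return "text";
}

const embedType = resolveType("mistral-embed", "base");
const chatType = resolveType("mistral-large", "base");
```

Here `embedType` resolves to `"embedding"` because the ID pattern fires at Tier 4, while `chatType` falls through to the `"base"` alias at Tier 5 and resolves to `"text"`.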

TYPE_ALIASES maps provider-specific type vocabulary to canonical ModelType values:

```ts
import { TYPE_ALIASES } from "open-model-selector/utils"

// TYPE_ALIASES = {
//   chat: 'text',        // Together AI
//   language: 'text',    // Vercel AI Gateway, Together AI
//   base: 'text',        // Mistral AI
//   moderation: 'text',  // Together AI
//   rerank: 'text',      // Together AI
//   audio: 'tts',        // Together AI (Cartesia Sonic etc.)
//   transcribe: 'asr',   // Together AI (Whisper etc.)
// }
```

`inferTypeFromId` uses heuristic pattern matching on a model's ID string (Tier 4). This is how `defaultModelNormalizer` classifies models from providers that don't include an explicit type field (e.g., OpenAI, Nvidia):

```ts
import { inferTypeFromId, MODEL_ID_TYPE_PATTERNS } from "open-model-selector/utils"

inferTypeFromId("dall-e-3")         // "image"
inferTypeFromId("gpt-image-1")      // "image"
inferTypeFromId("whisper-large-v3") // "asr"
inferTypeFromId("gpt-4o")           // undefined (no match → caller defaults to "text")
```

`MODEL_ID_TYPE_PATTERNS` is the `Array<[RegExp, ModelType]>` used internally. It's exported so you can inspect the built-in rules or extend them for custom providers.

Low-Level Helpers

| Function | Signature | Description |
|---|---|---|
| `extractBaseFields` | `(raw, type) → Omit<BaseModel, 'type'>` | Extracts shared `BaseModel` fields from a raw API object. Handles both Venice's nested `model_spec` format and flat top-level fields. Useful when writing custom per-type normalizers. |
| `toNum` | `(v: unknown) → number \| undefined` | Safely coerces an unknown value to a number. Returns `undefined` for `undefined`, `null`, empty strings, and `NaN`. Used internally by all normalizers. |
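To make the `toNum` contract concrete, here is a minimal sketch of a coercion helper with the same documented behavior. `sketchToNum` is an illustrative stand-in rather than the library's source, and treating whitespace-only strings as empty is an assumption.

```typescript
// Illustrative stand-in for toNum; the library's implementation may differ.
function sketchToNum(v: unknown): number | undefined {
  if (v === undefined || v === null) return undefined;
  // Number("") is 0, so empty strings need an explicit check.
  // Assumption: whitespace-only strings count as empty too.
  if (typeof v === "string" && v.trim() === "") return undefined;
  const n = Number(v);
  return Number.isNaN(n) ? undefined : n;
}

const parsedPrice = sketchToNum("0.000003");
const missingPrice = sketchToNum("");
const badPrice = sketchToNum("n/a");
```

`parsedPrice` is a number, while `missingPrice` and `badPrice` are both `undefined`, which lets a normalizer leave the field unset instead of storing `0` or `NaN`.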

Helper Utilities

These are exported from "open-model-selector/utils" (and also re-exported from the main entry):

```ts
import { isDeprecated } from "open-model-selector/utils"
// or equivalently:
import { isDeprecated } from "open-model-selector"
```

| Function | Signature | Description |
|---|---|---|
| `isDeprecated` | `(dateStr: string) → boolean` | Returns `true` if the given ISO 8601 date string is in the past. Handles date-only strings (`"2025-01-15"`) by normalizing to UTC. Returns `false` for invalid dates. |
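The documented behavior can be approximated as follows. `sketchIsDeprecated` is a hypothetical re-implementation, and its optional `now` parameter is an addition made here so the result is reproducible; the exported `isDeprecated` takes only the date string.

```typescript
// Hypothetical re-implementation for illustration only.
function sketchIsDeprecated(dateStr: string, now: Date = new Date()): boolean {
  // Date-only strings get an explicit UTC midnight so the comparison
  // does not depend on the local time zone.
  const normalized = /^\d{4}-\d{2}-\d{2}$/.test(dateStr)
    ? `${dateStr}T00:00:00Z`
    : dateStr;
  const time = Date.parse(normalized);
  // Invalid dates are never treated as deprecated.
  if (Number.isNaN(time)) return false;
  return time < now.getTime();
}

const reference = new Date("2025-06-01T00:00:00Z");
const pastDate = sketchIsDeprecated("2025-01-15", reference);
const futureDate = sketchIsDeprecated("2025-12-31", reference);
const invalidDate = sketchIsDeprecated("not-a-date", reference);
```

Against the fixed reference date, `pastDate` is `true` and the other two are `false`. A list filter along the lines of `models.filter((m) => !m.deprecation || !sketchIsDeprecated(m.deprecation.date))` would then mirror what `showDeprecated={false}` is described as doing.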

Framework Integration

"use client" & React Server Components

All component and hook modules include a "use client" directive — they are safe to import in Next.js App Router, Remix, and other RSC-aware frameworks without extra wrappers.

The open-model-selector/utils sub-path does not include "use client" and is safe to import directly in Server Components:

```ts
// ✅ Server Component: works fine
import { formatPrice, formatContextLength } from "open-model-selector/utils"

// ✅ Server Component: type-only imports are always safe
import type { TextModel, AnyModel } from "open-model-selector"
```

If you need the components or `useModels` hook, import them in a Client Component (any file with `"use client"` at the top, or rendered inside one).

SSR Compatibility

The library is SSR-safe:

- An isomorphic `useLayoutEffect` pattern is used internally, so there are no React warnings during server-side rendering.
- Works with Next.js (App Router and Pages Router), Remix, Gatsby, and any other SSR framework.
- Tooltips render via a `createPortal` call. They require a DOM environment, but this is handled automatically since the portal only mounts after hydration.

Security

- API keys are sent as an `Authorization: Bearer` header, never as URL query parameters.
- Error messages from failed requests are constructed from `response.status` and `response.statusText` only; user-supplied content is not interpolated into the DOM.
- Client-side key exposure: Any `apiKey` passed as a prop is visible in browser DevTools. For production apps, use one of:
  - A server-side proxy that injects the key (pass a relative `baseUrl` and a custom `fetcher`)
  - Environment variables scoped to the server (e.g., `VENICE_API_KEY`) with a thin API route
  - A scoped/read-only API key with minimal permissions

Recommended: Backend Proxy Pattern

Instead of passing apiKey directly, use a server-side proxy and the fetcher prop:

```ts
// Next.js API Route: app/api/models/route.ts
import { NextResponse } from 'next/server'

export async function GET() {
  const res = await fetch('https://api.venice.ai/api/v1/models', {
    headers: { Authorization: `Bearer ${process.env.VENICE_API_KEY}` },
  })
  return NextResponse.json(await res.json())
}
```

```tsx
// Client component
<ModelSelector
  baseUrl="/api"
  fetcher={(url, init) => fetch(url, init)}
  onChange={(id) => console.log(id)}
/>
```

This keeps your API key server-side and never exposes it to the browser.

Accessibility

The component implements the ARIA combobox pattern and is designed to work well with screen readers and keyboard-only navigation.

Combobox & Popover:

- Trigger button has `role="combobox"` with `aria-expanded`, `aria-haspopup="listbox"`, and `aria-controls` linking to the listbox
- Dynamic `aria-label` reflects the current selection state
- Search input is labeled (`aria-label="Search models"`)
- Loading state uses `role="status"` with `aria-live="polite"`; errors use `role="alert"`

Keyboard Navigation (provided by cmdk and Radix Popover):

- `↑` / `↓`: navigate the model list
- `Enter`: select the highlighted model
- `Escape`: close the popover and return focus to the trigger
- Type-ahead filtering via the search input (auto-focused on open)

Tooltips:

- Model detail tooltips use `role="tooltip"` with a unique `id` linked via `aria-describedby` on the trigger
- Tooltips appear on both hover and keyboard focus (`onFocus` / `onBlur`), and dismiss with `Escape`

Development

Scripts

| Command | Description |
|---|---|
| `npm run build` | Build with tsup (CJS + ESM + types + sourcemaps). Cleans `dist/` first via `prebuild`. |
| `npm run dev` | Build in watch mode for development. |
| `npm run typecheck` | Run TypeScript type checking (`tsc`). |
| `npm test` | Run tests with Vitest. |
| `npm run test:watch` | Run tests in watch mode. |
| `npm run storybook` | Start Storybook dev server on port 6006. |
| `npm run build-storybook` | Build static Storybook site. |
| `node scripts/capture-provider-snapshots.cjs` | Capture real provider API responses into `test-fixtures/providers/` for test fixture generation. Requires `.env` with API keys. |

`npm run prepublishOnly` runs typecheck → test → build automatically before publishing.

Testing

The project uses a layered testing strategy:

- Unit tests: Vitest with jsdom environment
- Component tests: `@testing-library/react` + `@testing-library/user-event`
- Browser tests: Storybook + Playwright via `@storybook/addon-vitest` and `@vitest/browser-playwright`

Test files:

- `src/components/model-selector.test.tsx`: Component tests
- `src/hooks/use-models.test.tsx`: Hook tests
- `src/utils/format.test.ts`: Format utility tests
- `src/utils/normalizers/normalizers.test.ts`: Normalizer tests
- `src/utils/normalizers/provider-compat.test.ts`: Provider compatibility tests (Together AI, Vercel, Mistral, OpenRouter, OpenAI, Nvidia, Helicone, Venice)

```bash
# Run all tests
npm test

# Run tests in watch mode
npm run test:watch
```

Storybook

The project includes comprehensive Storybook stories covering multiple scenarios: Default, PreselectedModel, SystemDefault, CustomPlaceholder, SortByNewest, ControlledFavorites, EmptyState, LoadingState, ErrorState, PopoverTop, WideContainer, DarkMode, MinimalModels, and VeniceLive.

Live provider stories are also available for testing against real APIs (OpenAI, OpenRouter, Together, Groq, Cerebras, Nvidia, Mistral, DeepSeek, SambaNova, Venice, Helicone, Vercel). To use them:

- Copy `.env.example` to `.env` and fill in the API keys you want to test
- Run `npm run storybook`; the Storybook config auto-loads your `.env` keys and configures CORS proxies

```bash
cp .env.example .env
# Edit .env with your API keys
npm run storybook
# Opens at http://localhost:6006
```

Contributing

- Fork the repository
- Create a feature branch (`git checkout -b feature/my-feature`)
- Make your changes
- Run `npm run typecheck && npm test` to verify
- Commit and push
- Open a Pull Request

License

MIT — see LICENSE for details.